MOOCs are a problematic concept, as are many things in education. Using bots to replicate various functions in MOOCs is also a problematic concept. Both MOOCs and bots seem to go in the opposite direction of what we know works in education (smaller class sizes and more interaction with humans). So, teaching with either or both concepts will open the doors for many different sets of problems.

However… there are also even bigger problems that our society is imposing on education (at least in some parts of the world): defunding of schools, lack of resources, and eroding public trust being just a few. I don’t like any of those, and I will continue to speak out against them. But I also can’t change them overnight.

So what do we do with the problems of fewer resources, fewer teachers, more students, and more information to teach as the world gets more complex? Some people like to just focus on fixing the systemic issues causing these problems. And we need those people. But even once they do start making headway… it will still be years before education improves from where it is. And how long until we even start making headway?

The current state of research into MOOCs and/or bots is really about dealing with the reality of where education is right now. Despite there being some larger, well-funded research projects into both, the reality is that most research consists of very low (or no) budget attempts to learn something about how to create some “thing” that can help a shrinking pool of teachers educate a growing mass of students. Imperfect solutions for an imperfect society. I don’t fully like it, but I can’t ignore it.

Unfortunately, many people are causing an unnecessary either/or conflict between “dealing with scale as it is now” and “fixing the system that caused the scale in the first place.” We can work at both – help education scale now, while pushing for policy and culture change to better support and fund education as a whole.

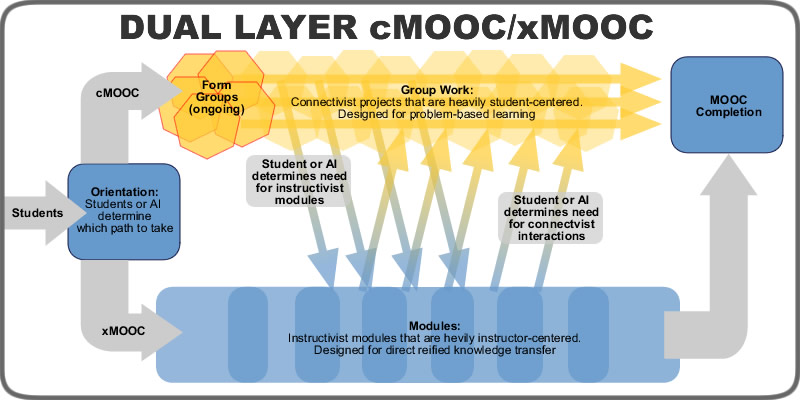

On top of all of that, MOOCs tend to be “massively” misunderstood (sorry, couldn’t resist that one). Despite what the hype claims, they weren’t created as a means to scale or democratize education. The first MOOCs were really about connectivism, autonomy, learner choices, and self-directed learning. The fact that they had thousands of learners in them was just a thing that happened due to the openness, not an intended feature.

Then the “second wave” of MOOCs came along, and that all changed. A lot of this was due to some unfortunate hype around MOOCs published in national publications that proclaimed some kind of “educational utopia” of the future, where MOOCs would “democratize” education and bring quality online learning to all people.

Most MOOC researchers just scoffed at that idea – and they still do. However, they also couldn’t ignore the fact that MOOCs do bring about scaled education in various ways, even if that was not the intention. So that is where we are at now: if you are going to research MOOCs, you have to realize that the context of that research will be about scale and autonomy in some way.

But it seems that the misunderstandings of MOOCs are hard-coded into the discourse now. Take the recent article “Not Even Teacher-Bots Will Save Massive Open Online Courses” by Derek Newton. Of course, open education and large courses existed long before they were labeled “MOOCs”… so it is unclear what needs “saving” here, or what it needs to be saved from. But the article is a critique of a study out of the University of Edinburgh (I believe this is the study, even though Newton never links to it for you to read it for yourself) that sought “to increase engagement” by designing and deploying “a teacher-bot (botteacher) in at least one MOOC.” Newton then turns around and says “the idea that a pre-programmed bot could take over some teaching duties is troubling in Blade Runner kind of way.” Right there you have your first problematic switch-a-roo. “Increasing engagement” is not the same as “taking over some teaching duties.” That is like saying that lane departure warning lights on cars are the same as taking over some driving duties. You can’t conflate something that assists with something that takes over. Your car will crash if you think “lane departure warnings” are “self-driving cars.”

But the crux of Newton’s article is that because the “bot-assisted platform pulls in just 423 of 10,145, it’s fair to say there may be an engagement problem…. Botty probably deserves some credit for teaching us, once again, that MOOCs are fatally flawed and that questions about them are no longer serious or open.” Of course, there are fatal flaws in all of our current systems – political, religious, educational, etc. – yet questions about all of those can still be serious or open. So you kind of have to toss out that last part as opinion and not logic.

The bigger issue is that calling 423 people an “engagement problem” is an unfortunate way to look at education. That is still a lot of people, considering most courses at any level can’t engage 30 students. But this misunderstanding comes from the fact that many people still misunderstand what MOOC enrollment means. 10,000 people signing up for a MOOC is not the same as 10,000 people signing up for a typical college course. Colleges advertise to millions of prospective students, who then have to go through a huge process of applications and trials to even get to register for a course. ALL of that is bypassed for a MOOC. You see a course and click to register. Done. If colleges did the same, they would also get 10,000+ signing up for a course. But they would probably only get 50-100 showing up for the first class – far fewer than any first week in most MOOCs.
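To put rough numbers on that comparison, here is a minimal sketch of the arithmetic. The 423 and 10,145 figures come from Newton’s article as quoted above; the 50-100 out of 10,000 figures are only my own guess from the paragraph above, not measured data, and the function name is just something made up for illustration.

```python
def show_up_rate(participants: int, signups: int) -> float:
    """Percentage of sign-ups who actually participated."""
    return 100 * participants / signups


# Figures quoted from Newton's article about the bot-assisted MOOC.
mooc_rate = show_up_rate(423, 10_145)

# Hypothetical open-enrollment college course: a guess, not measured data.
college_low = show_up_rate(50, 10_000)
college_high = show_up_rate(100, 10_000)

print(f"MOOC: {mooc_rate:.2f}% of sign-ups engaged")
print(f"Open-enrollment college course (guess): {college_low:.1f}% to {college_high:.1f}%")
```

Under those assumptions, the MOOC figure works out to roughly 4% of sign-ups, against 0.5% to 1% for the guessed college scenario. The percentages only mean something once you know what filtering happened before the sign-up count was taken.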

Make no mistake: college courses would have engagement rates that are just as bad if they removed the filters of application and enrollment on who could sign up. Additionally, the requirement of “physical re-location” for most would make those engagement rates even worse than MOOCs if the entire process were considered.

Look at it this way: 30 years ago, if someone said “I want to learn History beyond what a book at the library can tell me,” they would have to go through a long and expensive process of applying to various universities, finally (possibly) getting accepted at one, and then moving to where that University was physically located. Then, they would have to pay hundreds or thousands of dollars for that first course. How many tens of thousands of possible students get filtered out of the process because of all of that? With MOOCs, all of that is bypassed. Find a course on History, click to enroll, and you are done.

When we talk about “engagement” in courses, it is typically situated in a traditional context that filters out tens of thousands of people before the course even starts. To then transfer the same terminology to MOOCs is to utilize an inaccurate critique based on concepts rooted in a completely different filtering mechanism.

Unfortunately, these fundamental misunderstandings of MOOC research are not just one-off rarities. This same author also took a problematic look at a study I helped out on with Aras Bozkurt and Whitney Kilgore. Just look at the title of Newton’s previous article: Are Teachers About To Be Replaced By Bots? Yeah, we didn’t even go that far, and intentionally made sure to stay as far away from saying that as possible.

Some of the critique of our work by Newton is just very weird, like where he says: “Setting aside existential questions such as whether lines of code can search, find, utter, reply or engage discussions.” Well, yes – they can do that. It’s not really an existential question at all. It’s a question of “come sit at a computer with me and I will show you that a bot is doing all of that.” Google has had bots doing this for a long, long time. We have pretty much proven that Russian bots are doing this all over the world.

Then Newton gets into pull quotes, where I think he misunderstands what we meant by the word “fulfill.” For example, it seems Newton misunderstood this quote from our article: “it appears that Botty mainly fulfils the facilitating discourse category of teaching presence.” If you read our quote in context, it is part of the Findings and Discussion section, where we are discussing what the bot actually did. But it is clear from the discussion that we don’t mean that Botty “fully fills” the discourse category, but that what it does “fully qualifies” as being in that category. Our point was in light of “self-directed and self-regulated learners in connectivist learning environments” – a context where learners probably would not engage with the instructor in the first place. In this context, yes it did seem that Botty was filling in for an important instructor role in a way that satisfies the parameters of that category. Not perfectly, and not in a way that replaces the teacher. It was in a context where the teacher wasn’t able to be present due to the realities of where education is currently in society – scaled and less supported.

Newton goes on to say: “What that really means is that these researchers believe that a bot can replace at least one of the three essential functions of teaching in a way that’s better than having a human teacher.”

Sorry, we didn’t say “replace” in an overall context, only “replace” in a specific context that is outside of the teacher’s reach. We also never said “better than having a human teacher.” That part is just a shameful attempt at putting words in our mouths that we never said. In fact, you can search the entire article and find we never said the word “better” about anything.

Then Newton goes on to misuse another quote of ours (“new technological advances would not replace teachers just because teachers are problematic or lacking in ability, but would be used to augment and assist teachers”). His response to this is to say that we think “new technology would not replace teachers just because they are bad but, presumably, for other reasons entirely.”

Sorry, Newton, but did you not read the sentence directly after the one you quoted? We said “The ultimate goal would not be to replace teachers with technology, but to create ways for non-human teachers to work in conjunction with human teachers in ways that remove all ontological hierarchies.” Not replacing teachers…. working in conjunction. Huge difference.

Newton continues injecting inaccurate ideas into the discussion, such as “Bots are also almost certain to be less expensive than actual teachers too.” Well, actually, they currently aren’t always less expensive in the long run. Then he tries to connect another quote from us about how lines between bots and teachers might get blurred as proof that we… think they will cost less? That part just doesn’t make sense.

Newton also did not take time to understand what we meant by “post-humanist,” as evidenced by this statement of his: “the analysis of Botty was done, by design, through a “post-humanist” lens through which human and computer are viewed as equal, simply an engagement from one thing to another without value assessment.” Contrast his statement with our actual statement on post-humanism: “Bayne posits that educators can essentially explore how to retain the value of teacher presence in ways that are not in opposition to some forms of automation.” Right there we clearly state that humans still maintain value in our study context.

Then Newton pulls his most shameful bait and switch of the whole article at the end: pulling one of our “problematic questions” (where we intentionally highlighted problematic questions for the sake of critique) and attributing it as our conclusion: “the role of the human becomes more and more diminished.” Newton then goes on to state: “By human, they mean teacher. And by diminished, they mean irrelevant.”

Sorry Newton, that is simply not true. Look at our question following soon after that one, where we start the question with “or” to negate what our list of problematic questions asks: “Or should AI developers maintain a strong post-humanist angle and create bot-teachers that enhance education while not becoming indistinguishable from humans?” Then, maybe read our conclusion after all of that and the points it makes, like “bot-teachers can possibly be viewed as a learning assistant on the side.”

The whole point of our article was to say: “Don’t replace human teachers with bot teachers. Research how people mistake bots for real people and fix that problem with the bots. Use bots to help in places where teachers can’t reach. But above all, keep the humans at the center of education.”

Anyways, after a long side-tangent about our article, back to the point of misunderstanding MOOCs, and how researchers of MOOCs view MOOCs. You can’t evaluate research about a topic – whether MOOCs or bots or post-humanism or any topic – through a lens that fundamentally misunderstands what the researchers were examining in the first place. All of these topics have flaws and concerns, and we need to critically think about them. But we have to do so through the correct lens and contextual understanding, or else we will cause more problems than we solve in the long run.

Matt is currently an Instructional Designer II at Orbis Education and a Part-Time Instructor at the University of Texas Rio Grande Valley. Previously he worked as a Learning Innovation Researcher with the UT Arlington LINK Research Lab. His work focuses on learning theory, heutagogy, and learner agency. Matt holds a Ph.D. in Learning Technologies from the University of North Texas, a Master of Education in Educational Technology from UT Brownsville, and a Bachelor of Science in Education from Baylor University. His research interests include instructional design, learning pathways, sociocultural theory, heutagogy, virtual reality, and open networked learning. He has a background in instructional design and teaching at both the secondary and university levels and has been an active blogger and conference presenter. He also enjoys networking and collaborative efforts involving faculty, students, administration, and anyone involved in the education process.